AMD Instinct MI250X vs NVIDIA A100 80GB PCIe for AI

A head-to-head comparison of specs, pricing, and real-world AI performance to help you pick the right hardware.

Disclosure: Some links on this page are affiliate links. We may earn a commission if you make a purchase — at no extra cost to you.

Quick Verdict

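Both are capable AI accelerators, but they win on different axes. The AMD Instinct MI250X costs less and offers more memory (128GB vs 80GB) and higher memory bandwidth. The NVIDIA A100 80GB PCIe costs more, and its premium buys the mature CUDA software ecosystem, Multi-Instance GPU (MIG) partitioning, and a lower 300W TDP. Choose the MI250X if memory capacity per dollar matters most and your stack runs on ROCm. Choose the A100 if software compatibility and ease of deployment come first.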

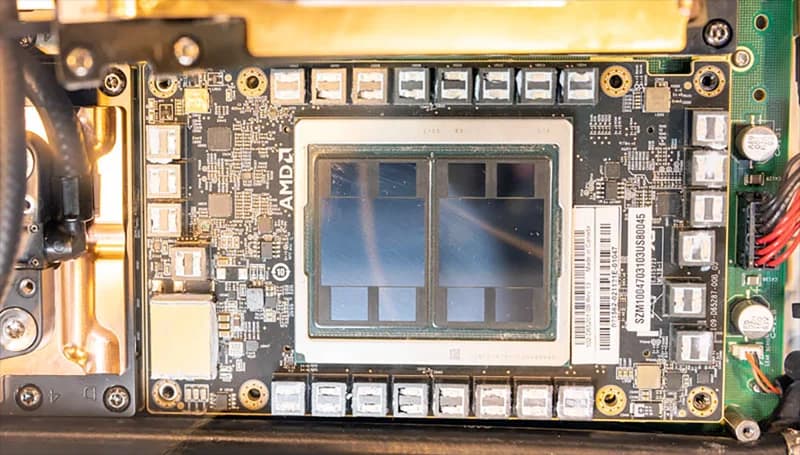

AMD Instinct MI250X

$8,000 – $11,000

AMD's flagship AI accelerator with 128GB of HBM2e, split across two graphics compute dies (64GB each) that software sees as two GPUs. A serious alternative to NVIDIA for large-model training and inference workloads that need massive memory.

NVIDIA A100 80GB PCIe

$12,000 – $15,000

Enterprise-grade AI accelerator for large-scale training and inference. Its 80GB of HBM2e holds models of up to roughly 30B parameters at FP16 on a single card; 70B-class models need 4-bit quantization or a second GPU.
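Whether a model fits comes down to simple arithmetic: parameter count times bytes per parameter, plus headroom for the KV cache, activations, and runtime. The minimal sketch below assumes weights dominate and reserves a hypothetical 20% of VRAM for everything else; the `fits` helper and that overhead figure are illustrative, not a vendor sizing rule. On the MI250X, anything larger than 64GB must also be split across its two 64GB dies.

```python
# Rough check of whether a model's weights fit in GPU memory.
# Assumption: weights dominate memory use; a fixed fraction of VRAM
# (hypothetical 20% default) is reserved for KV cache, activations, and runtime.

BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weights_gb(params_billion: float, precision: str) -> float:
    """Approximate weight footprint in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits(params_billion: float, precision: str, vram_gb: float,
         overhead_frac: float = 0.2) -> bool:
    """True if the weights fit in the VRAM left after reserving overhead."""
    usable_gb = vram_gb * (1.0 - overhead_frac)
    return weights_gb(params_billion, precision) <= usable_gb


if __name__ == "__main__":
    for name, vram in [("A100 80GB", 80), ("MI250X 128GB", 128)]:
        for size in (13, 34, 70):
            for prec in ("fp16", "int8", "int4"):
                print(f"{name}: {size}B @ {prec} -> {fits(size, prec, vram)}")
```

Under these assumptions a 70B model at FP16 (about 140GB of weights) fits on neither card, and on the A100 it only fits once quantized to 4 bits.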

Specs Comparison

| Spec | AMD Instinct MI250X | NVIDIA A100 80GB PCIe |
|---|---|---|
| Price | $8,000 – $11,000 | $12,000 – $15,000 |
| VRAM | 128GB HBM2e | 80GB HBM2e |
| Compute Units / SMs | 220 CUs | 108 SMs |
| Memory Bandwidth | 3,276 GB/s | 1,935 GB/s |
| TDP | 500W | 300W |
| Form Factor / Interface | OAM module (PCIe 4.0 x16 host link) | PCIe 4.0 x16 card |
| Matrix / Tensor Cores | 880 Matrix Cores | 432 (3rd Gen) |
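The table also supports a quick value comparison. This minimal sketch divides the midpoint of each quoted price range by VRAM and by memory bandwidth. The midpoint pricing is an assumption (street prices vary), and it uses NVIDIA's 1,935 GB/s datasheet figure for the PCIe A100; 2,039 GB/s is the SXM version's figure.

```python
from dataclasses import dataclass


@dataclass
class Card:
    name: str
    price_low: float      # USD, low end of the quoted range
    price_high: float     # USD, high end of the quoted range
    vram_gb: float
    bandwidth_gbs: float  # peak memory bandwidth in GB/s

    @property
    def mid_price(self) -> float:
        """Midpoint of the quoted price range (assumed representative)."""
        return (self.price_low + self.price_high) / 2

    def usd_per_gb_vram(self) -> float:
        """Dollars per GB of on-card memory."""
        return self.mid_price / self.vram_gb

    def usd_per_gbs(self) -> float:
        """Dollars per GB/s of peak memory bandwidth."""
        return self.mid_price / self.bandwidth_gbs


CARDS = [
    Card("AMD Instinct MI250X", 8_000, 11_000, 128, 3_276),
    Card("NVIDIA A100 80GB PCIe", 12_000, 15_000, 80, 1_935),
]

if __name__ == "__main__":
    for card in CARDS:
        print(f"{card.name}: ${card.usd_per_gb_vram():.0f} per GB of VRAM, "
              f"${card.usd_per_gbs():.2f} per GB/s of bandwidth")
```

By this measure the MI250X works out to roughly $74 per GB of VRAM against roughly $169 for the A100. The ratios capture hardware only, not software support.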

AMD Instinct MI250X

Pros

- Massive 128GB memory capacity
- 3.2 TB/s peak memory bandwidth
- Growing ROCm software ecosystem

Cons

- ROCm is less mature than CUDA
- Fewer community tutorials and examples
- Higher power draw (500W vs 300W TDP)
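The power gap is easy to put in dollars. The sketch below assumes 24/7 operation at full TDP and a hypothetical $0.15/kWh electricity rate, and it ignores cooling overhead (PUE), so treat the result as a floor rather than a facility estimate.

```python
# Annual electricity cost of running a card at its TDP.
# Assumptions (hypothetical, adjust to your site): continuous operation,
# $0.15 per kWh, and no cooling overhead (real facilities add PUE on top).

HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(tdp_watts: float, usd_per_kwh: float = 0.15,
                       utilization: float = 1.0) -> float:
    """Yearly cost in USD of drawing tdp_watts for the given share of hours."""
    kwh = tdp_watts / 1000 * HOURS_PER_YEAR * utilization
    return kwh * usd_per_kwh


if __name__ == "__main__":
    mi250x = annual_energy_cost(500)  # MI250X TDP from the spec table
    a100 = annual_energy_cost(300)    # A100 80GB PCIe TDP from the spec table
    print(f"MI250X: ${mi250x:,.0f}/yr, A100: ${a100:,.0f}/yr, "
          f"difference: ${mi250x - a100:,.0f}/yr")
```

At these assumed rates the 200W gap works out to about $263 per card per year.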

NVIDIA A100 80GB PCIe

Pros

- Proven AI performance on the mature CUDA software stack
- 80GB HBM2e for large models
- Multi-Instance GPU (MIG) support, with up to seven isolated instances

Cons

- High upfront cost
- Passively cooled, so it needs server-chassis airflow
- Overkill for small-scale operations
