AMD Instinct MI250X vs NVIDIA H100 PCIe 80GB for AI

A head-to-head comparison of specs, pricing, and real-world AI performance to help you pick the right hardware.

Disclosure: Some links on this page are affiliate links. We may earn a commission if you make a purchase — at no extra cost to you.

Quick Verdict

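Both are capable AI accelerators, but they win on different axes. The AMD Instinct MI250X costs roughly a third as much and carries more memory (128GB vs 80GB). That makes it attractive for memory-hungry workloads, provided your stack runs on ROCm. The NVIDIA H100 PCIe 80GB earns its premium with FP8 support through the Transformer Engine, lower power draw, and the mature CUDA ecosystem. Choose based on your budget, your software stack, and whether you need the extra memory or the extra compute features.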

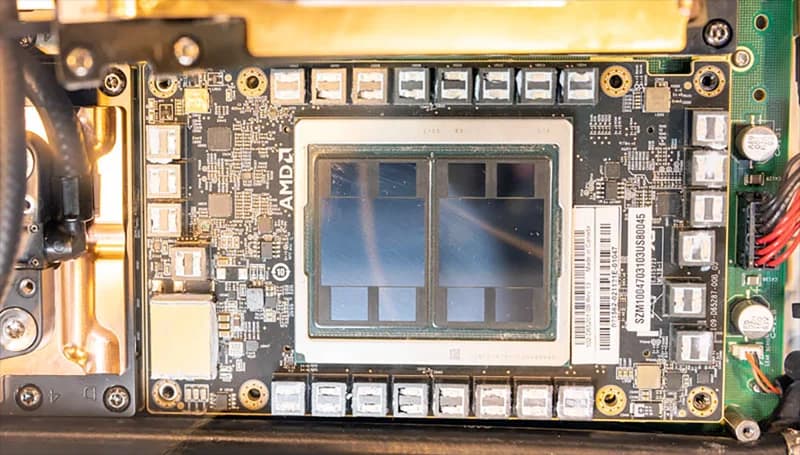

AMD Instinct MI250X

$8,000 – $11,000

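AMD's CDNA 2 datacenter accelerator with 128GB HBM2e. It is a serious alternative to NVIDIA for large-model training and inference workloads that need a lot of memory. The 128GB is split across two graphics compute dies, which software sees as two GPUs with 64GB each.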

NVIDIA H100 PCIe 80GB

$25,000 – $33,000

NVIDIA's Hopper-architecture GPU for AI training and inference in a PCIe card, with 80GB of HBM2e memory. The HBM3 variant is the H100 SXM5 module. Its Transformer Engine adds FP8 support, which NVIDIA credits for roughly 3x the AI performance of the A100. It is a common choice for production LLM serving and model training.
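To see what the 128GB vs 80GB gap means in practice, you can estimate a model's weight footprint as parameters times bytes per parameter. The sketch below is a rough rule of thumb, not a capacity planner. It counts weights only and uses a hypothetical 70B-parameter model as the example. The `weight_gb` and `fits` helpers are illustrative names.

```python
# Rough check: do a model's weights fit in accelerator memory?
# Counts weights only; KV cache, activations, and framework overhead are
# ignored, so real deployments need more headroom than this suggests.

BYTES_PER_PARAM = {
    "fp16": 2,
    "bf16": 2,
    "fp8": 1,   # native FP8 math is an H100 feature; the MI250X (CDNA 2) lacks it
    "int8": 1,
    "int4": 0.5,
}


def weight_gb(params_billion: float, dtype: str) -> float:
    """Weight footprint in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[dtype]


def fits(params_billion: float, dtype: str, memory_gb: float) -> bool:
    """True if the weights alone fit in memory_gb."""
    return weight_gb(params_billion, dtype) <= memory_gb


if __name__ == "__main__":
    # The MI250X's 128 GB is split across two dies that software sees as two
    # 64 GB GPUs, so a model loaded onto a single device only gets 64 GB.
    devices = {"MI250X (both dies)": 128, "MI250X (one die)": 64, "H100 PCIe": 80}
    params = 70  # hypothetical 70B-parameter model
    for dtype in ("fp16", "int8"):
        need = weight_gb(params, dtype)
        for name, mem in devices.items():
            print(f"{params}B {dtype}: {need:g} GB of weights, {name} ({mem} GB): fits={fits(params, dtype, mem)}")
```

With these inputs, FP16 weights for a 70B model (140 GB) fit on neither card. The 8-bit weights (70 GB) fit on the H100 PCIe, or on the MI250X once the model is sharded across both dies, but not on a single die.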

Specs Comparison

| Spec | AMD Instinct MI250X | NVIDIA H100 PCIe 80GB |
|---|---|---|
| Price | $8,000 – $11,000 | $25,000 – $33,000 |
| Memory | 128GB HBM2e (2 × 64GB, one pool per die) | 80GB HBM2e |
| Compute units | 220 CUs | 114 SMs |
| Matrix / Tensor cores | 880 Matrix Cores | 456 Tensor Cores (4th Gen) |
| Memory bandwidth | 3,276.8 GB/s | 2,000 GB/s |
| TDP | 500W | 350W |
| Form factor / host interface | OAM module, PCIe 4.0 x16 | PCIe card, PCIe 5.0 x16 |
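To put the price gap in memory terms, the sketch below divides the midpoint of each listed price range by the card's memory capacity. Using the midpoint is an assumption, since actual quotes vary widely. The `price_per_gb` helper is an illustrative name.

```python
# Price per GB of on-board memory, using the midpoint of each listed price range.
CARDS = {
    "AMD Instinct MI250X": {"price_range": (8_000, 11_000), "vram_gb": 128},
    "NVIDIA H100 PCIe 80GB": {"price_range": (25_000, 33_000), "vram_gb": 80},
}


def midpoint(lo: float, hi: float) -> float:
    """Midpoint of a price range (an assumed 'typical' price)."""
    return (lo + hi) / 2


def price_per_gb(card: dict) -> float:
    """Midpoint price divided by memory capacity in GB."""
    return midpoint(*card["price_range"]) / card["vram_gb"]


for name, card in CARDS.items():
    print(f"{name}: ${price_per_gb(card):,.2f} per GB")
```

That works out to about $74 per GB for the MI250X versus $362.50 per GB for the H100 PCIe, a gap of nearly 5x on memory alone. The ratio says nothing about compute throughput or software support.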

AMD Instinct MI250X

Pros

- Massive 128GB memory capacity

- 3.2 TB/s peak memory bandwidth

- Growing ROCm software ecosystem
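Bandwidth matters because single-stream LLM decoding is usually memory-bound. Each new token requires reading every weight once, so tokens per second is capped at roughly bandwidth divided by weight size. The sketch below applies that rule of thumb with the vendors' published peak bandwidths and a hypothetical 26 GB model (13B parameters in FP16). It ignores KV-cache traffic and kernel efficiency, so real throughput will be lower.

```python
# Upper bound on single-stream (batch size 1) decode speed for a
# memory-bandwidth-bound LLM: every new token reads all weights once.
# Ignores KV-cache traffic and kernel inefficiency, so real numbers are lower.


def max_tokens_per_s(bandwidth_gb_s: float, weight_gb: float) -> float:
    """Theoretical ceiling: bytes per second / bytes read per token."""
    return bandwidth_gb_s / weight_gb


# Published peak bandwidths (GB/s). The MI250X total is split evenly across
# its two dies, so a model placed on one die sees only half of it; the
# "both dies" entry assumes an ideal tensor-parallel split with no
# communication overhead.
BANDWIDTH = {
    "MI250X, one die": 3276.8 / 2,
    "MI250X, both dies (ideal split)": 3276.8,
    "H100 PCIe": 2000.0,
}

weights_gb = 13 * 2  # hypothetical 13B-parameter model in FP16
for name, bw in BANDWIDTH.items():
    print(f"{name}: <= {max_tokens_per_s(bw, weights_gb):.0f} tokens/s")
```

For this model the ceiling is about 63 tokens/s on one MI250X die, 126 across both dies, and 77 on the H100 PCIe. The headline bandwidth advantage therefore only materializes if the workload is spread across both dies.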

Cons

- ROCm less mature than CUDA

- Fewer community tutorials

- Higher power consumption (500W vs 350W TDP)
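To gauge what the TDP difference costs, the sketch below converts a sustained power draw into a yearly electricity bill. The $0.15/kWh price and 100% utilization are hypothetical inputs, and cooling overhead is excluded. Real draw also varies with workload. The `annual_energy_cost` helper is an illustrative name.

```python
# Annual energy cost at a sustained power draw, for comparing the two cards'
# TDPs. Electricity price and utilization are hypothetical inputs; real draw
# varies with workload, and cooling overhead is not included.

HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(watts: float, usd_per_kwh: float, utilization: float = 1.0) -> float:
    """Yearly cost in USD of drawing `watts` for `utilization` of the year."""
    kwh = watts / 1000 * HOURS_PER_YEAR * utilization
    return kwh * usd_per_kwh


PRICE_USD_PER_KWH = 0.15  # hypothetical electricity price
mi250x = annual_energy_cost(500, PRICE_USD_PER_KWH)
h100 = annual_energy_cost(350, PRICE_USD_PER_KWH)
print(f"MI250X: ${mi250x:,.0f}/yr, H100 PCIe: ${h100:,.0f}/yr, difference: ${mi250x - h100:,.0f}/yr")
```

At that assumed rate, the 150W TDP gap comes to roughly $200 a year per card, which is small next to the purchase-price difference. The picture shifts if you run many cards or pay more for power and cooling.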

NVIDIA H100 PCIe 80GB

Pros

- NVIDIA-claimed 3x AI performance over the A100

- Transformer Engine for FP8 precision

- CUDA, the industry standard for production AI

Cons

- Extremely expensive ($25K+)

- Passively cooled, so it needs a server chassis with adequate airflow

- Long lead times on orders
