Topic Hub

Complete Guide to Running LLMs Locally

Running LLMs locally gives you privacy, zero API costs, and full control over your AI stack. But choosing the right hardware matters: too little VRAM and your model won't load, too slow a GPU and inference crawls. This hub collects every guide, tutorial, and comparison you need to go from zero to running 70B+ parameter models on your own machine — covering GPU selection, quantization trade-offs, software setup with Ollama and llama.cpp, and real-world benchmark data from our testing.
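As a rough illustration of why VRAM decides whether a model loads at all, the sketch below estimates weight size from parameter count and quantization width. It is a back-of-the-envelope rule of thumb, not a measurement: the function names and the 1.2x `overhead` multiplier (standing in for KV cache and runtime buffers) are assumptions made for this example.

```python
def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(params_billion: float, bits_per_weight: float,
                 vram_gb: float, overhead: float = 1.2) -> bool:
    """Rough fit check. `overhead` is an assumed multiplier for KV cache
    and runtime buffers; real usage varies with context length and runtime."""
    needed_gb = weight_size_gb(params_billion, bits_per_weight) * overhead
    return needed_gb <= vram_gb


if __name__ == "__main__":
    # A 70B model at common quantization widths, checked against a 32 GB card.
    for bits in (16, 8, 4):
        size = weight_size_gb(70, bits)
        print(f"70B at {bits}-bit: ~{size:.0f} GB of weights, "
              f"fits in 32 GB: {fits_in_vram(70, bits, 32)}")
```

Under these assumptions, a 70B model at 4-bit needs about 35 GB for weights alone, so it does not fit entirely in a single 32GB card and would need offloading, multiple GPUs, or a larger memory pool.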

Top Picks

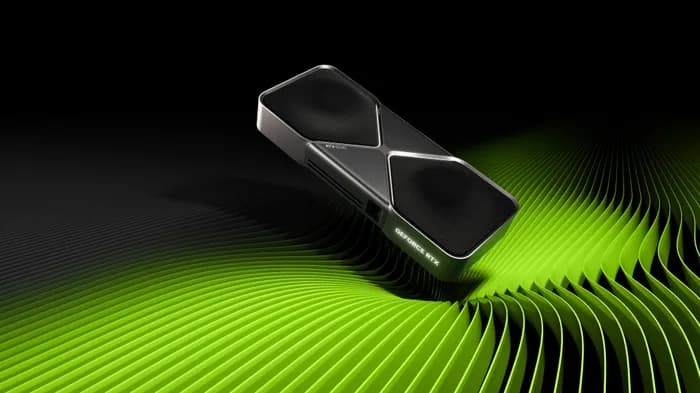

NVIDIA GeForce RTX 5090

$1,999 – $2,199

- VRAM: 32GB GDDR7

- CUDA Cores: 21,760

- Memory Bandwidth: 1,792 GB/s

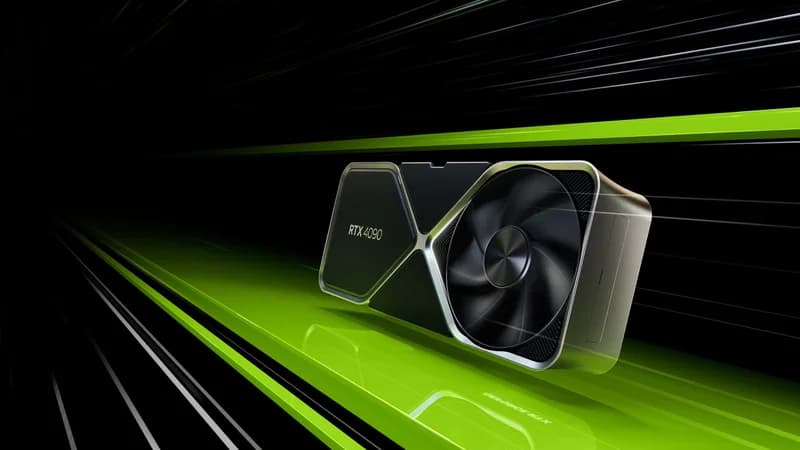

NVIDIA GeForce RTX 4090

$1,599 – $1,999

- VRAM: 24GB GDDR6X

- CUDA Cores: 16,384

- Memory Bandwidth: 1,008 GB/s

Apple Mac Mini M4 Pro

$1,399 – $1,599

- Chip: Apple M4 Pro

- CPU Cores: 12-core

- GPU Cores: 18-core
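The memory bandwidth figures listed for the two NVIDIA cards matter because token generation is usually limited by how fast weights can be read from memory. A common simplification is that each generated token requires reading the active weights once, which gives a theoretical ceiling of bandwidth divided by bytes read. The sketch below applies that simplification; the 20 GB model size is a hypothetical value chosen for illustration, and real throughput will be lower than this ceiling.

```python
def decode_tps_ceiling(bandwidth_gb_s: float, bytes_read_gb: float) -> float:
    """Theoretical upper bound on tokens/second for bandwidth-bound decoding,
    assuming the weights are read from memory once per generated token."""
    return bandwidth_gb_s / bytes_read_gb


# Bandwidth figures (GB/s) taken from the Top Picks listing above.
CARDS = {"RTX 5090": 1792, "RTX 4090": 1008}

# Hypothetical quantized model size in GB, for illustration only.
MODEL_GB = 20.0

if __name__ == "__main__":
    for name, bandwidth in CARDS.items():
        ceiling = decode_tps_ceiling(bandwidth, MODEL_GB)
        print(f"{name}: at most ~{ceiling:.1f} tok/s on a {MODEL_GB:.0f} GB model")
```

The ceiling scales linearly with bandwidth, so under this model the RTX 5090's advantage over the RTX 4090 for single-stream generation is roughly the ratio of the two bandwidth figures.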

Related Articles

NVIDIA Nemotron 3 Nano Omni — Local Hardware Guide (2026)

NVIDIA's first frontier-class multimodal open model runs on a single 16GB GPU. Here's the complete hardware buyer's guide: VRAM math, GPU picks, Apple Silicon options, tok/s estimates, and a decision tree for Nemotron 3 Nano Omni in 2026.

DeepSeek V4-Flash Local Hardware Guide 2026 — What It Actually Takes to Run a 284B MIT-Licensed MoE

DeepSeek V4-Flash dropped April 24 under MIT license: 284B total / 13B active, 1M context, Claude Haiku-tier API pricing. Here's what hardware actually runs it locally — five priced buyer paths from $5,999 Mac Studio to $11K RTX PRO 6000, the 90 GB don't-bother cutoff, and why the MoE active-parameter math reframes every decision.

Qwen 3.6-35B-A3B Local Hardware Guide 2026: The $800 GPU That Now Runs a Frontier MoE

Alibaba's Qwen 3.6-35B-A3B (released 2026-04-16, Apache 2.0) is the first frontier-class open coding model that runs usefully on a single used RTX 3090 — because only ~3B of its 35B parameters are active per token. Full quantization table, five priced buyer paths from $249 to $2,000, Mac Studio unified-memory coverage, and the MoE math that explains why an $800 GPU now keeps up.

Qwen3-Coder-Next Local Hardware Guide 2026 — VRAM, GPU & Memory You Actually Need

Qwen3-Coder-Next is the first frontier coding model that's realistically local. 80B total / 3B active MoE, 256K context, 58.7% SWE-bench Verified — and it runs on a single RTX 5090 with 64GB of system RAM. Full VRAM math by quantization, buyer-tier builds from $1,500 to $10,000, Mac Studio coverage, and the agent-loop reality check no one else is writing.

Qwen 3.5 Local Hardware Guide 2026: Every Model from 0.8B to 397B

Qwen 3.5 rewrites the local AI playbook with native multimodal, 262K context, and hybrid MoE. Here's exactly which GPU, Mac, or mini PC you need for every model size — with VRAM math, tok/s benchmarks, and price-tiered recommendations from $250 to enterprise.

How Much RAM Do You Need for Local AI in 2026? System Memory Guide

32GB is the minimum, 64GB is recommended — but it depends on your models, your workflow, and whether you're on Apple Silicon. The definitive system RAM guide for running AI locally in 2026.

How to Use an Nvidia eGPU with Your Mac for Local AI in 2026

Apple just signed Tiny Corp's TinyGPU driver — the first official way to run Nvidia CUDA workloads on Apple Silicon Macs via external GPU. Here's the complete setup guide with GPU picks, enclosure recommendations, benchmarks, and step-by-step instructions for running local LLMs on your Mac + eGPU.

Running Google Gemma 4 Locally: Complete Hardware Guide (2026)

Gemma 4 just dropped with four model sizes under Apache 2.0. Here's exactly which GPU, Mac, or edge device you need to run every variant locally — from the 2B edge model to 31B Dense — with VRAM tables, benchmarks, budget tiers, and setup instructions.

RTX 5060 for Local AI: Can NVIDIA's $299 GPU Actually Run LLMs in 2026?

The RTX 5060 brings Blackwell to $299 with 8GB GDDR7 — but is that enough VRAM for local AI? We test real LLM inference with Ollama, benchmark against the RTX 5060 Ti and Arc B580, and tell you exactly who should (and shouldn't) buy this GPU for AI workloads.
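Several of the guides above lean on mixture-of-experts (MoE) arithmetic: all parameters must be stored, but only the active ones are read per token. The sketch below works through that split for the three MoE models named in this list, using the total and active parameter counts quoted in their summaries. The 4-bit quantization width and the function name are assumptions for illustration, and the active counts are approximate.

```python
# (total parameters, active parameters per token), in billions,
# as quoted in the article summaries above.
MODELS = {
    "DeepSeek V4-Flash": (284, 13),
    "Qwen 3.6-35B-A3B": (35, 3),
    "Qwen3-Coder-Next": (80, 3),
}


def moe_sizes_gb(total_b: float, active_b: float,
                 bits_per_weight: float = 4) -> tuple[float, float]:
    """Return (GB needed to store all weights, GB of weights read per token).
    The 4-bit default is an assumed quantization width."""
    gb_per_billion = bits_per_weight / 8
    return total_b * gb_per_billion, active_b * gb_per_billion


if __name__ == "__main__":
    for name, (total_b, active_b) in MODELS.items():
        stored, per_token = moe_sizes_gb(total_b, active_b)
        print(f"{name}: ~{stored:.1f} GB to store, ~{per_token:.1f} GB read per token")
```

Storage follows the total count while per-token reads follow the active count, which is why an MoE model can need a lot of memory yet still generate quickly once it fits.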
