Apple Mac Mini M4 Pro vs NVIDIA GeForce RTX 4060 Ti 16GB for AI

A head-to-head comparison of specs, pricing, and real-world AI performance to help you pick the right hardware.

Disclosure: Some links on this page are affiliate links. We may earn a commission if you make a purchase — at no extra cost to you.

Quick Verdict

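The Apple Mac Mini M4 Pro is the stronger overall performer, but at $1,399 – $1,599 it costs roughly $1,000 more than the card. Choose it if you want a complete, quiet machine with 24GB of unified memory and can justify the premium. The NVIDIA GeForce RTX 4060 Ti 16GB ($399 – $449) is the smarter pick for budget-conscious buyers who want full CUDA support. Keep in mind that it is an add-in card, so it needs a desktop PC to host it.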

Apple Mac Mini M4 Pro

$1,399 – $1,599

Silent, compact desktop with a 16-core GPU (paired with the 12-core CPU) and 24GB of unified memory. Ideal for running local LLMs and AI agents with zero fan noise and macOS simplicity.

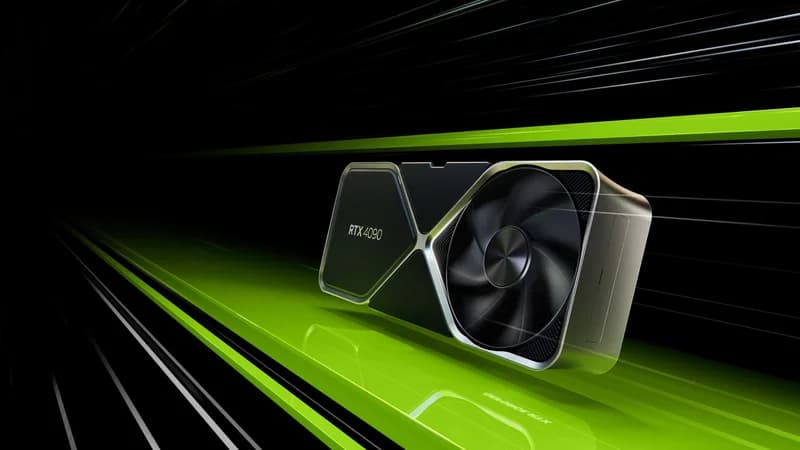

NVIDIA GeForce RTX 4060 Ti 16GB

$399 – $449

The balanced mid-range AI GPU. It pairs 16GB of GDDR6 with Ada Lovelace 4th-gen tensor cores for under $450, handles quantized 13B models comfortably, and runs Stable Diffusion XL with room to spare.

Specs Comparison

| Spec | Apple Mac Mini M4 Pro | NVIDIA GeForce RTX 4060 Ti 16GB |
|---|---|---|
| Price | $1,399 – $1,599 | $399 – $449 |
| Chip | Apple M4 Pro | N/A |
| CPU Cores | 12-core | N/A |
| GPU Cores | 16-core | N/A |
| Unified Memory | 24GB | N/A |
| Storage | 512GB SSD | N/A |
| VRAM | N/A | 16GB GDDR6 |
| Memory Bandwidth | N/A | 288 GB/s |
| CUDA Cores | N/A | 4,352 |
| Tensor Cores | N/A | 4th Gen |
| TDP | N/A | 160W |
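The memory rows above largely decide which models will load at all. As a rough illustration, the Python sketch below estimates the weight footprint of a model from its parameter count and quantization level, then checks it against a memory budget. The function names and the 20% overhead allowance (for KV cache, activations, and runtime) are assumptions made for this example, not measured values.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9


def fits(params_billion: float, bits_per_weight: float, memory_gb: float,
         overhead_fraction: float = 0.2) -> bool:
    """Return True if weights plus an assumed overhead allowance fit in memory_gb.

    overhead_fraction is a hypothetical allowance, not a measured figure.
    """
    needed = weight_memory_gb(params_billion, bits_per_weight) * (1 + overhead_fraction)
    return needed <= memory_gb


if __name__ == "__main__":
    # Memory sizes taken from the specs table: 16GB VRAM vs 24GB unified memory.
    for params, bits in [(13, 4), (13, 16), (30, 4)]:
        print(f"{params}B @ {bits}-bit: "
              f"{weight_memory_gb(params, bits):.1f} GB weights, "
              f"fits 16GB: {fits(params, bits, 16)}, "
              f"fits 24GB: {fits(params, bits, 24)}")
```

Under these assumptions, a 13B model at 4-bit needs about 6.5 GB for weights and fits on either device, while a 30B model at 4-bit needs about 15 GB for weights (about 18 GB with the allowance), which exceeds 16GB but fits within 24GB. On the Mac, that 24GB is shared with macOS and other apps, so the usable share is smaller than the headline number.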

Apple Mac Mini M4 Pro

Pros

- Completely silent operation
- Excellent single-thread AI inference
- macOS ecosystem with Homebrew & Ollama

Cons

- Limited to 24GB unified memory
- No CUDA, so ML framework support is more limited
- Not expandable after purchase

NVIDIA GeForce RTX 4060 Ti 16GB

Pros

- 16GB VRAM for 13B models and Stable Diffusion XL
- Full CUDA support, so it works with the widest range of AI tools
- Power-efficient 160W TDP

Cons

- Narrow 128-bit memory bus (288 GB/s) limits inference speed compared with higher-bandwidth cards
- 16GB ceiling makes 30B+ models hard to fit
- The RTX 5060 Ti now offers comparable performance at a lower price
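The bandwidth limitation can be made concrete with a back-of-the-envelope bound. When generating text one token at a time, a model typically has to read all of its weights from memory for each token, so memory bandwidth divided by model size gives a ceiling on single-stream decode speed. The Python sketch below uses the 288 GB/s figure from the specs table. The function name and the 6.5 GB model size (roughly a 13B model at 4-bit) are assumptions for illustration.

```python
def max_decode_tokens_per_s(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on single-stream decode speed, in tokens per second.

    Assumes every generated token requires reading all weights from memory
    once and ignores compute time, KV-cache reads, and other overheads.
    """
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gb_s / model_size_gb


if __name__ == "__main__":
    # 288 GB/s is the RTX 4060 Ti 16GB bandwidth from the specs table;
    # 6.5 GB is an assumed footprint for a 13B model at 4-bit.
    print(f"{max_decode_tokens_per_s(288, 6.5):.1f} tokens/s upper bound")
```

For these inputs the ceiling works out to about 44 tokens per second. Real throughput will be lower, and the same calculation applies to any device once its memory bandwidth is known.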
