NVIDIA A100 80GB PCIe vs NVIDIA GeForce RTX 4090 for AI

A head-to-head comparison of specs, pricing, and real-world AI performance to help you pick the right hardware.

Disclosure: Some links on this page are affiliate links. We may earn a commission if you make a purchase — at no extra cost to you.

Quick Verdict

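The two cards target different buyers. The NVIDIA A100 80GB PCIe offers more than three times the VRAM (80GB vs. 24GB) and roughly twice the memory bandwidth, but costs $12,000 – $15,000. The NVIDIA GeForce RTX 4090 costs $1,599 – $1,999 and suits models that fit in 24GB. Choose based on the size of the models you plan to run, your budget, and whether you need datacenter features such as MIG. The specs comparison below has the details.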

NVIDIA A100 80GB PCIe

$12,000 – $15,000

Enterprise-grade AI accelerator for large-scale training and inference. 80GB of HBM2e memory holds models up to roughly 30B parameters at FP16 without quantization; larger models need quantization or multiple GPUs.

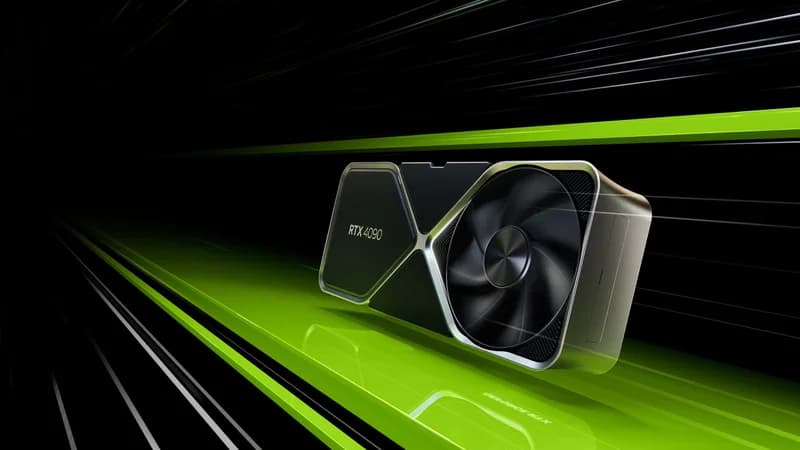

NVIDIA GeForce RTX 4090

$1,599 – $1,999

One of the strongest consumer GPUs for AI. 24GB of GDDR6X and 16,384 CUDA cores handle models up to roughly 30B parameters locally with 4-bit quantization; 70B-class models need CPU offloading or multiple cards. A common choice for AI workstations and local LLM setups.
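As a rough way to check which models fit on each card, the sketch below estimates weight size from parameter count and precision. The 1.2x headroom factor for KV cache and activations is an assumption chosen for illustration, not a measured value, and the function names are hypothetical.

```python
def weight_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_param / 8


def fits(params_billion: float, bits_per_param: int, vram_gb: float,
         overhead: float = 1.2) -> bool:
    """True if weights plus an assumed headroom factor fit in vram_gb.

    overhead=1.2 is an illustrative guess for KV cache and activations;
    real usage depends on context length, batch size, and runtime.
    """
    return weight_gb(params_billion, bits_per_param) * overhead <= vram_gb


if __name__ == "__main__":
    for params, bits in [(7, 16), (30, 4), (30, 16), (70, 4), (70, 16)]:
        size = weight_gb(params, bits)
        print(f"{params}B @ {bits}-bit: {size:.0f} GB weights | "
              f"24GB card: {fits(params, bits, 24)} | "
              f"80GB card: {fits(params, bits, 80)}")
```

Under these assumptions, a 70B model at 4-bit needs about 35GB for weights alone, which exceeds 24GB but fits in 80GB, while a 70B model at FP16 (about 140GB) fits on neither card by itself.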

Specs Comparison

| Spec | NVIDIA A100 80GB PCIe | NVIDIA GeForce RTX 4090 |
|---|---|---|
| Price | $12,000 – $15,000 | $1,599 – $1,999 |
| VRAM | 80GB HBM2e | 24GB GDDR6X |
| Tensor Cores | 432 (3rd Gen) | 512 (4th Gen) |
| Memory Bandwidth | 1,935 GB/s | 1,008 GB/s |
| TDP | 300W | 450W |
| Interface | PCIe 4.0 x16 | PCIe 4.0 x16 |
| CUDA Cores | 6,912 | 16,384 |
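One way to put the price and VRAM rows on a common footing is cost per gigabyte of memory. The sketch below uses the price ranges and VRAM sizes from the table; the `cards` dictionary and the `price_per_gb` helper are hypothetical names introduced for this example.

```python
# Price ranges (USD) and VRAM sizes taken from the specs table above.
cards = {
    "NVIDIA A100 80GB PCIe": {"price_usd": (12_000, 15_000), "vram_gb": 80},
    "NVIDIA GeForce RTX 4090": {"price_usd": (1_599, 1_999), "vram_gb": 24},
}


def price_per_gb(price_usd: float, vram_gb: float) -> float:
    """Purchase price divided by VRAM capacity, in USD per GB."""
    return price_usd / vram_gb


if __name__ == "__main__":
    for name, spec in cards.items():
        low, high = (price_per_gb(p, spec["vram_gb"]) for p in spec["price_usd"])
        print(f"{name}: ${low:.0f} to ${high:.0f} per GB of VRAM")
```

By this measure the RTX 4090 costs less than half as much per gigabyte, but the metric ignores the fact that a model which does not fit in 24GB cannot run on a single RTX 4090 without offloading, whatever the price.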

NVIDIA A100 80GB PCIe

Pros

- Datacenter-class training and inference performance

- 80GB HBM2e for large models

- Multi-Instance GPU (MIG) support

Cons

- Very expensive upfront cost ($12,000 – $15,000)

- Passively cooled; requires server-chassis airflow

- Overkill for small-scale operations

NVIDIA GeForce RTX 4090

Pros

- Proven workhorse for AI inference

- 24GB of VRAM fits many quantized models

- Strong community support and documentation

Cons

- High power consumption (450W TDP)

- Premium pricing for a consumer card ($1,599 – $1,999)

- Previous-gen Ada Lovelace architecture
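To put the TDP figures in context, the sketch below estimates yearly electricity cost for each card. It assumes the card draws its full TDP while in use, which overstates typical consumption; the 8 hours per day and $0.15 per kWh figures are placeholder assumptions to replace with your own values.

```python
def annual_energy_cost(tdp_watts: float, hours_per_day: float,
                       usd_per_kwh: float) -> float:
    """Yearly electricity cost in USD, assuming constant draw at TDP."""
    kwh_per_year = tdp_watts / 1000 * hours_per_day * 365
    return kwh_per_year * usd_per_kwh


if __name__ == "__main__":
    HOURS_PER_DAY = 8    # assumed usage, not a measured figure
    USD_PER_KWH = 0.15   # assumed electricity rate
    for name, tdp in [("NVIDIA A100 80GB PCIe", 300),
                      ("NVIDIA GeForce RTX 4090", 450)]:
        cost = annual_energy_cost(tdp, HOURS_PER_DAY, USD_PER_KWH)
        print(f"{name}: about ${cost:.0f} per year")
```

With these placeholder inputs the gap between the two cards is well under $100 per year, which is small next to the difference in purchase price.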
